The Next Frontier: Top 7 AI Platforms Shaping the World in 2026

From Generative Chatbots to Embodied Intelligence: A Deep Dive into the Systems Redefining Autonomy, Aviation, and Global Logistics.

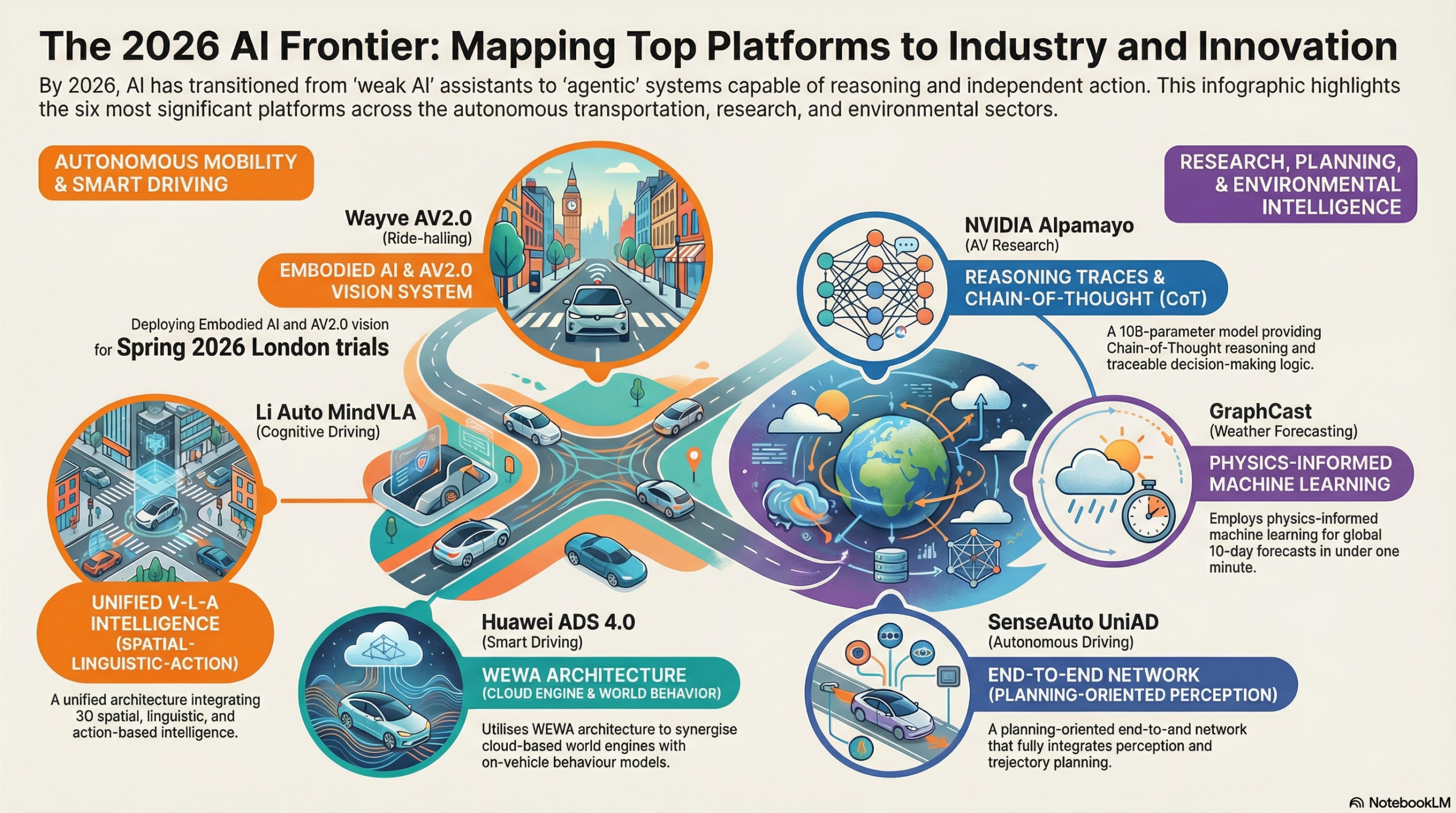

The exploratory age of AI has ended. In 2026, the industry has moved beyond the ‘chatbot’ hype cycle into the era of Embodied AI and Agentic Systems—the industrialization of intelligence. These systems no longer merely predict text; they reason, navigate physical space, and orchestrate logic within the high-stakes corridors of global industry.

📌 Key Takeaways: The 2026 Landscape

- From Chat to Action: AI has moved beyond text generation into “Embodied AI,” where systems interact with and navigate the physical world with human-like spatial awareness and tactile responsiveness.

- Reasoning-First Architectures: Platforms like NVIDIA Alpamayo and SenseAuto UniAD are replacing “black-box” decisions with transparent “Chain-of-Thought” reasoning traces, allowing for auditable decision-making.

- The End of HD Maps: Wayve and Li Auto are proving that intelligence—not pre-mapped data—is the key to global scalability, enabling “zero-shot” driving in thousands of cities without expensive geographic tethering.

- Physics-Informed Forecasting: Google DeepMind’s GraphCast is disrupting decades of supercomputing with AI that produces a 10-day global forecast in under a minute, providing a new layer of resilience for global supply chains.

- Legal Foundations: Legislation like the UK’s AV Act 2024 is providing the liability frameworks necessary for commercial deployment by defining the role of the “Authorised Self-Driving Entity” and shifting responsibility to manufacturers.

The Great Transition: Why 2026 is the Year of Embodied Intelligence

With the exploratory phase behind it, AI has entered the era of deployment, and the “ChatGPT moment” has reached the physical realm. Systems no longer follow only rigid, pre-programmed rules; they work through scenarios in a way that resembles human deliberation. This transition is a move toward Causal Reasoning, where the AI represents why it is taking an action rather than just matching a statistical pattern in pixels. For example, it slows down because a ball in the street implies a child might follow.

| Core Capability | 2024 (Generative AI) | 2026 (Embodied/Agentic AI) |
| --- | --- | --- |
| Interaction | Conversational & Textual | Physical & Action-Oriented |
| Logic Basis | Statistical Pattern Matching | Causal Chain-of-Thought (CoT) |
| Navigation | Static High-Definition Maps | Dynamic Environmental Learning |
| Safety Hook | Direct Human Oversight | Reasoning-Based Audit Trails |
| Core Data | Internet Text/Static Images | Multi-sensor Physical Streams |

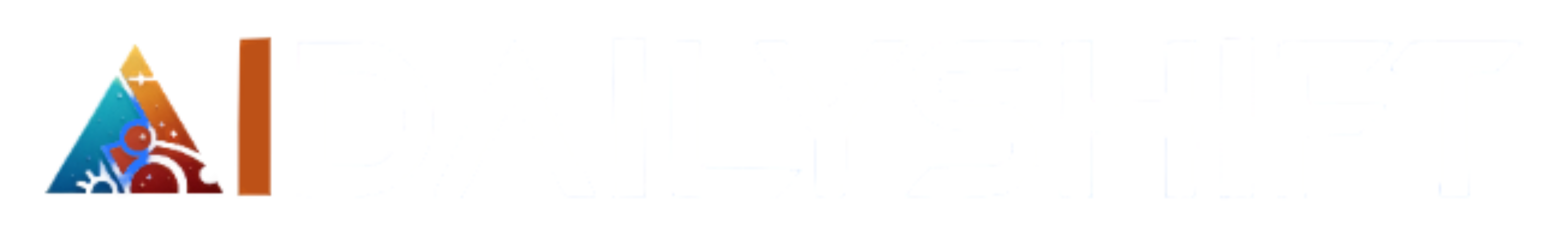

1. NVIDIA Alpamayo: The “Thinking” Physical AI

To understand how this shift is manifesting in the market, we must look at the seven architectural leaders redefining these boundaries, starting with the silicon giant that provided the foundation for physical reasoning. Driving isn’t just about vision; it’s about judgment. NVIDIA Alpamayo is the industry’s first open-source reasoning Vision-Language-Action (VLA) model designed to bridge the gap between perception and policy.

The Architecture of Reason

Unlike traditional models that merely react to data, Alpamayo uses a 10-billion-parameter architecture to generate driving trajectories alongside “reasoning traces.” Built on the NVIDIA Cosmos Reason backbone, the model interprets video input and “thinks out loud,” emitting its intermediate reasoning as text.

If a car nudges left to avoid a construction cone, Alpamayo provides a textual explanation: “Detecting construction zone; shifting 0.5m left to maintain safety buffer while avoiding oncoming traffic.” This transparency is critical for passing the “self-driving test” mandated by new global regulations, as it moves the industry away from uninterpretable “black-box” systems toward human-centric accountability.
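
To make the idea of a reasoning trace concrete, here is a minimal sketch of how a planned trajectory and its textual explanation might be stored together for later audit. The class and method names (`ReasonedAction`, `lateral_shift_m`, `audit_line`) and the coordinate convention are assumptions made for illustration; they are not taken from Alpamayo’s actual output format.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class ReasonedAction:
    """Hypothetical record pairing a planned path with its explanation."""

    # Planned path as (x, y) waypoints in metres, vehicle frame.
    # Assumption for this sketch: positive y points to the left.
    waypoints: List[Tuple[float, float]]
    # Human-readable reasoning steps emitted alongside the trajectory.
    reasoning: List[str] = field(default_factory=list)

    def lateral_shift_m(self) -> float:
        """Sideways offset between the first and last waypoint."""
        return self.waypoints[-1][1] - self.waypoints[0][1]

    def audit_line(self) -> str:
        """One log line an auditor could read without replaying the video."""
        return f"shift={self.lateral_shift_m():+.1f}m | " + "; ".join(self.reasoning)


action = ReasonedAction(
    waypoints=[(0.0, 0.0), (5.0, 0.25), (10.0, 0.5)],
    reasoning=[
        "Detecting construction zone",
        "shifting 0.5m left to maintain safety buffer",
    ],
)
print(action.audit_line())
```

Keeping the explanation in the same record as the trajectory means a reviewer can check whether the stated reason (a 0.5 m shift) matches what the planner actually produced.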

2. Wayve AV2.0: Scalable, Map-less Autonomy

While others focus on mapping the world, Wayve is teaching AI to drive anywhere. Their “Embodied AI” philosophy relies on pure intelligence rather than expensive, static High-Definition (HD) maps which quickly become obsolete.

The London Robotaxi Milestone

In early 2026, Wayve secured $1.5 billion in Series D funding—the largest investment in UK AI history—to launch commercial robotaxi trials in London. This “capital-light” model is revolutionary: Wayve provides the “AI Driver” (the software brain), while Uber operates the physical fleet. This structure mimics the software-licensing models seen in the smartphone industry, positioning Wayve as the foundational operating system for global mobility.

3. Amazon & Zoox: Purpose-Built Urban Mobility

While companies like Tesla and Waymo focus on modifying existing car designs, Amazon’s Zoox has taken a radically different approach: building the vehicle from the ground up to be a robotaxi, with no steering wheel, pedals, or front/back orientation.

Bi-Directional Intelligence and “Scenario Diffusion”

The 2026 rollout of Zoox in Las Vegas and San Francisco highlights its unique bi-directional capability. The vehicle can drive in either direction with equal performance, eliminating the need for complex U-turns in narrow urban streets.

Technically, Zoox’s safety edge comes from Scenario Diffusion. Using massive AWS compute clusters, Zoox generates millions of “synthetic” safety-critical scenarios (e.g., a child running out between two parked trucks). The AI “hallucinates” these rare events to train its perception systems, achieving a level of preparedness that real-world testing alone could never reach.

The Passenger Experience

The Zoox cabin is designed for social interaction rather than driving. It features face-to-face seating for four passengers, individual climate controls, and a “horseshoe” airbag system that wraps around passengers in an emergency. In 2026, Amazon has integrated Zoox into the broader Prime ecosystem, allowing members in select cities to summon a robotaxi via the same app they use for shopping and entertainment.

4. Li Auto MindVLA: The Multimodal Generalist

Chinese innovator Li Auto has transitioned to the MindVLA architecture—a system that unifies spatial, linguistic, and action-based intelligence into a single, cohesive “Cognitive Generalist.”

- V-Spatial Intelligence: Employs 3D Gaussian Splatting—a technique that renders complex environments as a collection of 3D ‘blurs’ rather than rigid polygons. This allows the vehicle to perceive the ‘soft edges’ and volumetric depth of objects like overhanging branches or steam, which traditional bounding boxes often misidentify.

- L-Linguistic Intelligence: Integrates MindGPT, enabling natural language interaction. Drivers can give complex, vague commands like “Stop near the entrance where it’s not too crowded.”

- A-Action Policy: Uses a diffusion-based decoder to generate smooth, human-like trajectories, ensuring the ride is free of the jerky movements typical of earlier robotic systems.
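
The “soft edges” point in the spatial-intelligence bullet can be illustrated with a toy comparison between a hard bounding box and a single 3D Gaussian. This is only a sketch under simplifying assumptions: it uses one isotropic Gaussian with a single `sigma`, whereas real Gaussian Splatting pipelines use many anisotropic Gaussians with learned opacity and colour. The function names are hypothetical and are not part of MindVLA.

```python
import math
from typing import Tuple

Point = Tuple[float, float, float]


def gaussian_density(p: Point, center: Point, sigma: float, opacity: float = 1.0) -> float:
    """Soft occupancy of one isotropic 3D Gaussian at point p (between 0 and opacity)."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(p, center))
    return opacity * math.exp(-sq_dist / (2.0 * sigma ** 2))


def in_box(p: Point, center: Point, half_size: float) -> bool:
    """Hard occupancy of an axis-aligned cube: inside or outside, nothing in between."""
    return all(abs(a - b) <= half_size for a, b in zip(p, center))


branch = (0.0, 0.0, 2.0)  # centre of an overhanging branch, in metres (illustrative)
for offset in (0.0, 0.3, 0.6):
    probe = (offset, 0.0, 2.0)
    box_hit = in_box(probe, branch, half_size=0.4)
    density = gaussian_density(probe, branch, sigma=0.3)
    print(f"offset={offset:.1f}m  box={box_hit}  gaussian={density:.3f}")
```

As the probe point moves away from the centre, the box answer flips abruptly from inside to outside, while the Gaussian value fades gradually. That gradual falloff is what lets a planner treat a wispy object differently from a solid one.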

5. Huawei ADS 4.0: The WEWA Architecture

Huawei’s ADS 4.0 introduces the WEWA (World Engine + World Action) architecture, representing a massive leap in how we solve “long-tail” safety problems.

- World Engine (WE): A cloud-based generative system that uses diffusion models to “invent” extreme scenarios at a density 1,000 times higher than real-world data collection.

- CAS 4.0 & XMC Engine: The “five-dimensional” collision avoidance system provides safety across “all targets, all speeds, all directions, all scenarios, and all weather.” Paired with the XMC digital chassis, it can stabilize a vehicle even during a high-speed tire blowout.

6. Google DeepMind GraphCast: Weather at AI Speed

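In the realm of global safety, GraphCast has outperformed traditional Numerical Weather Prediction (NWP) methods: in DeepMind’s published evaluation it beat ECMWF’s HRES system on roughly 90% of the verification targets tested.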

- Speed: Generates a 10-day global forecast in under one minute on a single Google TPU v4 chip.

- GenCast (Probabilistic Forecasting): The successor, GenCast, generates an ensemble of 50 or more individual predictions. This gives disaster relief agencies a quantified “cone of uncertainty” for hurricanes. In DeepMind’s published evaluation, GenCast was more accurate than ECMWF’s ENS ensemble on 97.2% of the targets tested.
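
As a rough illustration of how an ensemble becomes a risk number, the sketch below counts how many members exceed a threshold and reports the spread. The wind speeds are made-up illustrative values, not GenCast output, and `exceedance_probability` is a hypothetical helper rather than part of any DeepMind API.

```python
from statistics import mean, quantiles
from typing import Sequence


def exceedance_probability(members: Sequence[float], threshold: float) -> float:
    """Fraction of ensemble members at or above a threshold."""
    return sum(m >= threshold for m in members) / len(members)


# Illustrative peak wind speeds (m/s) from 10 hypothetical ensemble members.
wind_ms = [28.0, 31.5, 35.0, 33.2, 40.1, 29.8, 36.4, 38.9, 30.2, 34.7]

# 33 m/s is roughly where hurricane-force winds begin.
p_hurricane = exceedance_probability(wind_ms, threshold=33.0)

# n=10 returns 9 cut points; the first and last bracket the middle of the ensemble.
deciles = quantiles(wind_ms, n=10)

print(f"ensemble mean: {mean(wind_ms):.1f} m/s")
print(f"P(wind >= 33 m/s): {p_hurricane:.0%}")
print(f"decile spread: {deciles[0]:.1f} to {deciles[-1]:.1f} m/s")
```

A single deterministic forecast would report only one wind speed; the ensemble lets an agency say how likely the dangerous outcome is and how wide the plausible range remains.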

7. SenseAuto UniAD: Planning-Oriented Driving

SenseAuto’s UniAD framework integrates perception, prediction, and planning into a single, unified network.

- Eliminating Cascading Errors: In conventional systems, perception errors cascade. UniAD fixes this by making the entire network “planning-oriented.”

- Result: Compared with earlier baselines, UniAD reportedly reduced motion forecasting error by 38% and planning error by 28%, which should translate into smoother, more human-like planning.

Navigating the Regulatory Landscape

Innovation in 2026 is supported by the UK Automated Vehicles (AV) Act 2024, which clarifies that, while a vehicle is driving itself, responsibility for how it drives shifts from the human driver to the Authorised Self-Driving Entity (ASDE).

In aviation, the CAA’s CAP3064 framework is enabling Agentic AI in airport operations. These systems move beyond simple alerts to “Goal-Driven Orchestration,” autonomously dispatching replacement shuttles or re-routing connecting passengers during disruptions.

Final Thoughts: The Road to 2027

The AI landscape of 2026 is no longer a peripheral digital tool; it is the operating system of the physical world. As we move from speculative ‘GenAI’ to coordinated ‘Embodied Intelligence,’ the strategic advantage shifts to those who can master the intersection of silicon logic and physical action. The era of the automated world has arrived—the only question is whether your operational framework is ready to be governed by reason.

🔍 FAQ: The 2026 Self-Driving Landscape

Q: Are there any consumer cars I can buy in 2026 that are truly “hands-off, eyes-off” everywhere?

A: No. While Level 3 systems (like those from Mercedes and BMW) allow you to take your eyes off the road in specific conditions like highway traffic jams, no consumer vehicle offers Level 5 (unconstrained) autonomy yet. Level 4 (fully driverless) is currently limited to geofenced robotaxi services like Waymo and Zoox.

Q: What is the difference between Level 2+ and Level 3?

A: Level 2+ requires you to keep your eyes on the road at all times, even if your hands are off. Level 3 is the “eyes-off” threshold where the car handles the driving, but you must be ready to take over if the system issues a transition request (typically with a 10-60 second warning).
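
The distinction can be summarised as a small lookup table. This is a simplified sketch: the `AutomationLevel` class and its field names are hypothetical, “Level 2+” is treated as hands-off Level 2 (it is a marketing label rather than a formal level), and every entry applies only while the system is engaged within its approved operating conditions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AutomationLevel:
    name: str
    eyes_on_road: bool       # must the human watch the road continuously?
    human_is_fallback: bool  # does the human remain the fallback if the system hands over?


# Simplified summary of the levels discussed in this FAQ.
LEVELS = {
    "2+": AutomationLevel("Level 2+", eyes_on_road=True, human_is_fallback=True),
    "3": AutomationLevel("Level 3", eyes_on_road=False, human_is_fallback=True),
    "4": AutomationLevel("Level 4", eyes_on_road=False, human_is_fallback=False),
}


def may_look_away(level: str) -> bool:
    """True if the human may take their eyes off the road while the system is engaged."""
    return not LEVELS[level].eyes_on_road


for key, level in LEVELS.items():
    print(f"{level.name}: eyes-off allowed={may_look_away(key)}, "
          f"human fallback={level.human_is_fallback}")
```

The table makes the Level 3 trade-off visible: the human may look away, but is still the fallback when the system asks for a handover.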

Q: How do robotaxis handle “long-tail” events like extreme weather or road construction?

A: Platforms now use “Scenario Diffusion” (see the Zoox section) to train on millions of simulated edge cases. Additionally, many fleets use remote assistance—where a human operator in a command center can “see” through the car’s cameras and give it a hint (like “it’s okay to cross the double yellow line to go around this debris”) without actually steering the car.

Q: What happens if a self-driving car gets into an accident? Who is at fault?

A: Under legal frameworks such as the UK AV Act 2024, if the vehicle was driving itself in an authorised mode, liability shifts to the manufacturer or the “Authorised Self-Driving Entity.” The person in the car is considered a “user-in-charge” rather than a driver and is generally protected from criminal liability for how the vehicle drove.

Q: Can Zoox really drive at night or in the rain?

A: Yes. As of 2026, Zoox has expanded its operational design domain to include night driving and light-to-moderate rain. It uses a fusion of LiDAR, Radar, and Longwave-Infrared cameras to “see” heat signatures of pedestrians even in zero-light conditions.

📚 Further Reading

- NVIDIA Alpamayo Official Release – Full context on the industry’s first open-source reasoning VLA model: nvidianews.nvidia.com/news/alpamayo-autonomous-vehicle-development

- Wayve & Uber London Partnership – A closer look at the 2026 robotaxi trials and map-less driving: just-auto.com/news/wayve-uber-to-start-level-4-vehicle-trial/

- Huawei ADS 4.0 Technical Deep Dive – Comprehensive data on WEWA architecture and CAS 4.0 safety: autonews.gasgoo.com/articles/news/huawei-qiankun-intelligent-driving-moving-another-step-forward-2013967408870301697

- Google DeepMind GraphCast Research – Foundational Science journal article on AI-based weather forecasting: deepmind.google/blog/graphcast-ai-model-for-faster-and-more-accurate-global-weather-forecasting/

- SenseAuto UniAD Best Paper – The original research paper introducing the “planning-oriented” framework: sensetime.com/en/news-detail/51166787

- UK Automated Vehicles Act 2024 – Official legislative framework: legislation.gov.uk/ukpga/2024/10/contents